Artificial Intelligence Composes Music

By Russell E Glaue, May 12, 2011

Under the guidance from Dr. BJ Lee, WIU School of Computer Sciences.

With research sponsorship from Center for the Application of Information Technologies (CAIT), Western Illinois University.

These are preliminary discoveries of my research. Research is currently ongoing.

Computers have been provisioned by CAIT for running my AI models.

The Samples

The research continues, but I have some preliminary test results of a

computer artificial intelligence (AI) model trained to compose music. I have

posted one sample here for general review of my research. (2011-04-23)

I am currently training new models with more learning capacity.

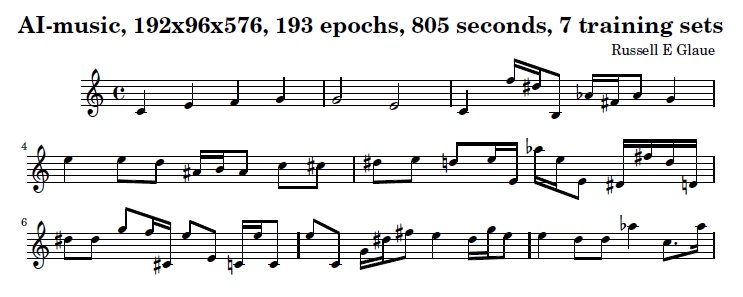

The first two measures is my input to the AI model software program.

The remaining six measures is what the computer AI model software program composes.

This is the first attempt using a simple AI model.

Though there may be seen some musical flow, as opposed to random notes, I do

not consider the output to be musical. The output does not even follow proper

Music Theory. And the output doesn't relate to the musical-idea of the input

as I expect it should. So I consider this model to be a failure.

However, the issue may be only because there is not enough learning capacity in the Model.

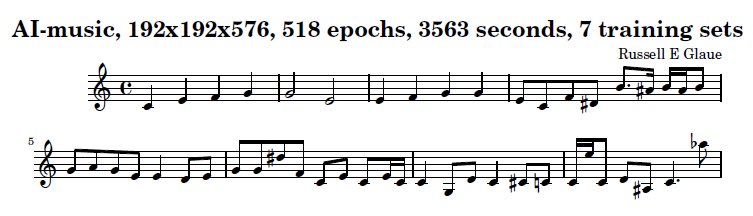

This musical output is much improved from the output produced in the 192x96x578 model.

The flow of the music is much improved.

The idea from the first two measures is carried on into the third measure.

There are a lot fewer accidentals (sharp and flat notes)

However, there should be no accidentals or at least only one or so which fits

into the musicality of the piece. This output has 7 accidentals (5+1 sharps

and 1 flat) and they do not fit musically.

The musical output *almost* follows good music theory for composition. It does

build off of the C note and the chords of it, but it is not completely there.

So I am going to assume an AI NN model with more more learning capacity will

produce something more satisfying of music theory principles for composition.

My Approach

The AI model will be trained to accept the first two measures of a musical excerpt, and produce the following six measures of the same musical excerpt. After a series of training, the AI model will be given an unknown measure of music, and will be tested to see if the results are not only musical, but adhere to music theory.

It cannot be expected that the AI model is given the first two measures of some unknown musical selection and be expected to produce the following six measures. Music is random, or at least unexpected as every composer does not follow some exact equation for producing musical ideas.

Instead, what can be measured is two aspects which one is quantitative, and the other qualitative. I will test the musical output to find if it adheres to correct musical theory (quantitative), and if I feel it sounds musical to some degree (qualitative).

It can be expected that the musical excerpt produced by the AI model does adhere to correct music theory as the music it will be trained on also adheres to music theory principles. However, it is unknown what the AI composed music will sound like. The Musical excerpts used as training data are from various composers in various styles. And the AI model will learn only to produce music based on this training data that varies in style, rhythm, and musical idea. The output is likely to be some combination of all these features of the training data, which may produce an undesirable sounding composition.

Translation between Human Readable and Computer Readable

I use the software program Lily Pond (http://www.lilypond.org) for the basis of translating composed music to text. Then I convert the music in Lily Pond fomat into binary for the AI input. The reverse is used for translating the AI output to readable composed music.

I wrote a program to perform the actual translation between formats. The Lily Pond software is based off a textual representation of music that is translated into a "beautiful" design composition score.

This is a simple textual representation in Lily Pond of the song "Mary Had a Little Lamb":

e4 d4 c4 d4 e4 e4 e2 d4 d4 d2 e4 g4 g2 e4 d4 c4 d4 e4 e4 e4 e4 d4 d4 e4 d4 c1

Mary had a little lamb, little lamb, little lamb, mary had a little lamb its fleece was white as snow.

The character string e4 for example represents the note E (e) as a quarter note (4), the string g2 as the note G (g) as a half note (2), and the string c1 as the note C (c) as a whole note (1). This musical excerpt is all within one octave, and there are no rest notes.

Five bit representation of notes

Since the music input is all transposed into the key of C, the chromatic scale and thus octaves are designated by that key. The binary representation of 31 notes and 1 rest within 5 bits will be:

00000 = D (lower octave, or octave 1)

00001 = D# or Eb

00010 = E

00011 = F

00100 = F# or Gb

00101 = G

00110 = G# or Ab

00111 = A

01000 = A# or Bb

01001 = B (lower octave, or octave 1)

01010 = C (middle octave, or octave 2)

01011 = C# or Db

01100 = D

01101 = D# or Eb

01110 = E

01111 = F

10000 = F# or Gb

10001 = G

10010 = G# or Ab

10011 = A

10100 = A# or Bb

10101 = B (middle octave, or octave 1)

10110 = C (upper octave, or octave 3)

10111 = C# or Db

11000 = D

11001 = D# or Eb

11010 = E

11011 = F

11100 = F# or Gb

11101 = G

11110 = G# or Ab (upper octave, or octave 3)

11111 = the rest note

One bit to define a notes duration within a sequence

The 6th bit of a input and output group defines if the current note division of the group (16th note division) is a continuation of the note from the previous group. 0 is off, and 1 is on. Thus if the previous group represents the note A, the current group also represents the note A, and the duration bit is set to 0 in the previous group and 1 in the current group, the resulting translation of the output combines these two 16th A notes into one 8th A note.

So the value 0 turns a "note accumulation" flag off for the current group, meaning it is the start of a new note. Then, for every group that follows and has a value of 1 keeps a "note accumulation" flag on so that the duration of all notes in the sequence for which the value is 1 is concated together to form one longer note.

The immediately understood negative aspect of this format is that a series of triplet notes cannot be accounted for. This is a series of three notes played within one count duration that is typically constructed of a series of notes divisible by 2.

REG - © 2011, All rights reserved

REG - © 2011, All rights reserved

REG - © 2011, All rights reserved

REG - © 2011, All rights reserved